New tool detects AI-generated radiology reports

The study uses a 14,000-report dataset to train a model to detect synthetic medical text.

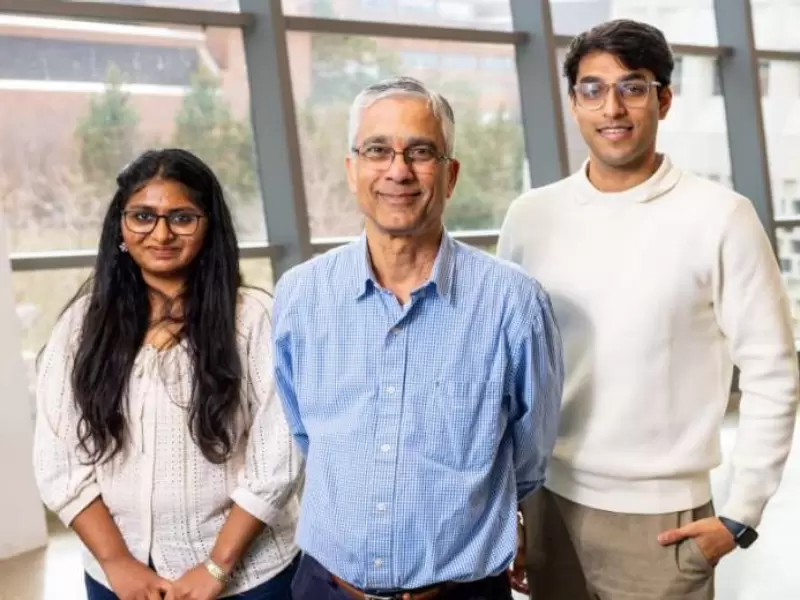

From left to right, Tanvi Ranga, Nalini Ratha and Arjun Ramesh Kaushik. Credit: Meredith Forrest-Kulwicki, University at Buffalo.


A new artificial intelligence tool can detect AI-generated radiology reports, addressing risks of falsified medical documentation and fraudulent insurance claims.

The tool, developed by Indian-origin researchers at the University at Buffalo, is trained to distinguish between clinician-written reports and those generated by AI systems.


The work is part of a study titled "Detecting Synthetic Radiology Reports Using Style Disentanglement," presented at the 2025 GenAI4Health workshop during the Conference on Neural Information Processing Systems in San Diego.

As part of the study, the team created what it describes as a first-of-its-kind dataset of 14,000 paired chest X-ray reports, combining radiologist-authored reports with synthetic versions.

The AI-generated reports were produced using two approaches: text-to-text generation, which paraphrases existing reports using large language models, and image-to-text generation, which produces full reports directly from radiographic images using medical vision-language models.

The dataset integrates both text-based and image-based synthetic reports and focuses on the findings section, which contains detailed clinical observations and domain-specific terminology.

The detection system is based on a BERT–Mamba architecture designed to separate stylistic features from clinical content. While AI systems can replicate medical terminology, the model identifies differences in phrasing, punctuation, and word choice to detect synthetic text.

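The system achieved Matthews correlation coefficient (MCC) scores ranging from 92 percent to 100 percent across both text-to-text and image-to-text categories. MCC is a classification metric that takes into account correct and incorrect predictions for both classes, so a high score requires the model to perform well on human-written and AI-generated reports alike.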
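For readers unfamiliar with the metric, the following is a minimal sketch of how an MCC score can be computed from the four outcomes of a binary classifier. It is not the researchers' evaluation code; the function name `mcc` and the example counts are hypothetical and chosen only for illustration.

```python
import math

def mcc(tp, tn, fp, fn):
    """Matthews correlation coefficient from confusion-matrix counts.

    tp: AI-generated reports correctly flagged
    tn: clinician-written reports correctly passed
    fp: clinician-written reports wrongly flagged
    fn: AI-generated reports missed
    Returns a value between -1 (always wrong) and 1 (always right).
    """
    numerator = tp * tn - fp * fn
    denominator = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    # By convention, MCC is reported as 0 when any marginal total is zero.
    if denominator == 0:
        return 0.0
    return numerator / denominator

# Hypothetical counts, not taken from the study.
score = mcc(tp=98, tn=99, fp=1, fn=2)
print(f"MCC: {score:.2%}")
```

A score reported as "92 percent" corresponds to an MCC of 0.92 on this scale.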

Detection accuracy exceeded 99 percent in text-based scenarios, even when AI-generated reports closely resembled original versions. The model also demonstrated the ability to identify AI-generated reports from systems it had not previously encountered.

The researchers found that AI-generated reports tend to use more elaborate and polished language, while clinicians typically use concise and direct terminology, creating measurable stylistic differences that the model can detect.
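The kind of stylistic gap described above can be illustrated with a few hand-built measurements. The sketch below is an assumption-laden toy, not the BERT–Mamba model from the study: the function `style_features`, the chosen features, and both sample sentences are invented for illustration.

```python
import re
import string

def style_features(text):
    """Compute a few crude style measurements for a piece of text."""
    words = re.findall(r"[A-Za-z']+", text.lower())
    # Naive sentence split; decimals such as "3.5 cm" would be split too.
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    punct = sum(1 for ch in text if ch in string.punctuation)
    n_words = max(len(words), 1)
    return {
        "avg_sentence_len": len(words) / max(len(sentences), 1),
        "punct_per_word": punct / n_words,
        "type_token_ratio": len(set(words)) / n_words,
        "avg_word_len": sum(len(w) for w in words) / n_words,
    }

# Invented examples, not drawn from the dataset.
concise = "No focal consolidation. Heart size normal."
elaborate = ("The lungs are clear bilaterally, with no evidence of focal "
             "consolidation, pleural effusion, or pneumothorax.")

for label, text in [("concise", concise), ("elaborate", elaborate)]:
    print(label, style_features(text))
```

In this toy example the longer, more polished sentence yields a much higher average sentence length than the terse one. A learned model would pick up far subtler cues than these hand-written features.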

The team is continuing to refine the dataset and detection framework, including fine-tuning vision-language models for improved clinical alignment and expanding the dataset to additional radiology categories with a broader range of AI systems.

The dataset is also expected to serve as a controlled testbed for evaluating the reliability and safe deployment of AI-generated clinical narratives.

While the study focuses on radiology, the researchers said the style-based detection approach could be applied across sectors vulnerable to AI-generated forgeries, including insurance, finance, journalism, education and the legal profession.

The tool was developed by a research team led by Nalini Ratha, along with doctoral researchers Arjun Ramesh Kaushik and Tanvi Ranga.


Malvika Choudhary
